Givens method

| Givens (rotation) method for the QR decomposition of a square matrix | |

| Sequential algorithm | |

| Serial complexity | 2 n^3 |

| Input data | n^2 |

| Output data | n^2 |

| Parallel algorithm | |

| Parallel form height | 11n-16 |

| Parallel form width | O(n^2) |

Primary authors of this description: Alexey Frolov.

Contents

- 1 Properties and structure of the algorithm

- 1.1 General description of the algorithm

- 1.2 Mathematical description of the algorithm

- 1.3 Computational kernel of the algorithm

- 1.4 Macro structure of the algorithm

- 1.5 Implementation scheme of the serial algorithm

- 1.6 Serial complexity of the algorithm

- 1.7 Information graph

- 1.8 Parallelization resource of the algorithm

- 1.9 Input and output data of the algorithm

- 1.10 Properties of the algorithm

- 2 Software implementation of the algorithm

- 3 References

1 Properties and structure of the algorithm

1.1 General description of the algorithm

Givens method (which is also called the rotation method in the Russian mathematical literature) is used to represent a matrix in the form A = QR, where Q is a unitary and R is an upper triangular matrix[1]. The matrix Q is not stored and used in its explicit form but rather as the product of rotations. Each (Givens) rotation can be specified by a pair of indices and a single parameter.

colomn numbers: \begin{matrix} \ _{i-1}\quad _i\quad _{i+1} & \ & _{j-1}\ \ _j\quad _{j+1}\end{matrix}

T_{ij} = \begin{bmatrix} 1 & \cdots & 0\quad 0\quad 0 & \cdots & 0\quad 0\quad 0 & \cdots & 0 \\ \vdots & \ddots & \vdots & \vdots & \vdots & \vdots \\ 0 & \cdots & 1\quad 0\quad 0 & \cdots & 0\quad 0\quad 0 & \cdots & 0 \\ 0 & \cdots & 0\quad c\quad 0 & \cdots & 0\ -s\ 0 & \cdots & 0 \\ 0 & \cdots & 0\quad 0\quad 1 & \cdots & 0\quad 0\quad 0 & \cdots & 0 \\ \vdots & \vdots & \vdots & \ddots & \vdots & \vdots & \vdots \\ 0 & \cdots & 0\quad 0\quad 0 & \cdots & 1\quad 0\quad 0 & \cdots & 0 \\ 0 & \cdots & 0\quad s\quad 0 & \cdots & 0\quad c\quad 0 & \cdots & 0 \\ 0 & \cdots & 0\quad 0\quad 0 & \cdots & 0\quad 0\quad 1 & \cdots & 0 \\ \vdots & \vdots & \vdots & \vdots & \vdots & \ddots & \vdots \\ 0 & \cdots & 0\quad 0\quad 0 & \cdots & 0\quad 0\quad 0 & \cdots & 1 \\ \end{bmatrix}

In a conventional implementation of Givens method, this fact makes it possible to avoid using additional arrays by storing the results of decomposition in the array originally occupied by A. Various uses are possible for the QR decomposition of A. It can be used for solving a SLAE (System of Linear Algebraic Equations) Ax = b or as a step in the so-called QR algorithm for finding the eigenvalues of a matrix.

At each step of Givens method, two rows of the matrix under transformation are rotated. The parameter of this transformation is chosen so as to eliminate one of the entries in the current matrix. First, the entries in the first column are eliminated one after the other, then the same is done for the second column, etc., until the column n-1. The resulting matrix is R. The step of the method is split into two parts: the choice of the rotation parameter and the rotation itself performed over two rows of the current matrix. The entries of these rows located to the left of the pivot column are zero; thus, no modifications are needed there. The entries in the pivot column are rotated simultaneously with the choice of the rotation parameter. Hence, the second part of the step consists in rotating two-dimensional vectors formed of the entries of the rotated rows that are located to the right of the pivot column. In terms of operations, the update of a column is equivalent to multiplying two complex numbers (or to four multiplications, one addition and one subtraction for real numbers); one of these complex numbers is of modulus 1. The choice of the rotation parameter from the two entries of the pivot column is a more complicated procedure, which is explained, in particular, by the necessity of minimizing roundoff errors. The tangent t of half the rotation angle is normally used to store information about the rotation matrix. The cosine c and the sine s of the rotation angle itself are related to t via the basic trigonometry formulas

c = (1 - t^2)/(1 + t^2), s = 2t/(1 + t^2)

It is the value t that is usually stored in the corresponding array entry.

1.2 Mathematical description of the algorithm

In order to obtain the QR decomposition of a square matrix A, this matrix is reduced to the upper triangular matrix R (where R means right) by successively multiplying A on the left by the rotations T_{1 2}, T_{1 3}, ..., T_{1 n}, T_{2 3}, T_{2 4}, ..., T_{2 n}, ... , T_{n-2 n}, T_{n-1 n}.

Each T_{i j} specifies a rotation in the two-dimensional subspace determined by the i-th and j-th components of the corresponding column; all the other components are not changed. The rotation is chosen so as to eliminate the entry in the position (i, j). Zero vectors do not change under rotations and identity transformations; therefore, the subsequent rotations preserve zeros that were earlier obtained to the left and above the entry under elimination.

At the end of the process, we obtain R=T_{n-1 n}T_{n-2 n}T_{n-2 n-1}...T_{1 3}T_{1 2}A.

Since rotations are unitary matrices, we naturally have Q=(T_{n-1 n}T_{n-2 n}T_{n-2 n-1}...T_{1 3}T_{1 2})^* =T_{1 2}^* T_{1 3}^* ...T_{1 n}^* T_{2 3}^* T_{2 4}^* ...T_{2 n}^* ...T_{n-2 n}^* T_{n-1 n}^* and A=QR.

In the real case, rotations are orthogonal matrices; hence, Q=(T_{n-1 n}T_{n-2 n}T_{n-2 n-1}...T_{1 3}T_{1 2})^T =T_{1 2}^T T_{1 3}^T ...T_{1 n}^T T_{2 3}^T T_{2 4}^T ...T_{2 n}^T ...T_{n-2 n}^T T_{n-1 n}^T.

To complete this mathematical description, it remains to specify how the rotation T_{i j} is calculated [2] and list the formulas for rotating the current intermediate matrix.

Let the matrix to be transformed contain the number x in its position (i,i) and the number y in the position (i,j). Then, to minimize roundoff errors, we first calculate the uniform norm of the vector z = max (|x|,|y|).

If the norm is zero, then no rotation is required: t=s=0, c=1.

If z=|x|, then we calculate y_1=y/x and, next, c = \frac {1}{\sqrt{1+y_1^2}}, s=-c y_1, t=\frac {1-\sqrt{1+y_1^2}}{y_1}. The updated value of the entry (i,i) is x \sqrt{1+y_1^2}.

If z=|y|, then we calculate x_1=x/y and, next, t=x_1 - x_1^2 sign(x_1), s=\frac{sign(x_1)}{\sqrt{1+x_1^2}}, c = s x_1. The updated value of the entry (i,i) is y \sqrt{1+x_1^2} sign(x).

Let the parameters c and s of the rotation T_{i j} have already been obtained. Then the transformation of each column located to the right of the i-th column can be described in a simple way. Let the k-th column have x as its component i and y as its component j. The updated values of these components are cx - sy and sx + cy, respectively. This calculation is equivalent to multiplying the complex number with the real part x and the imaginary part y by the complex number (c,s).

1.3 Computational kernel of the algorithm

The computational kernel of this algorithm can be thought of as compiled of two types of operation. The first type concerns the calculation of rotation parameters, while the second deals with the rotation itself (which can equivalently be described as the multiplication of two complex numbers with one of the factors having the modulus 1).

1.4 Macro structure of the algorithm

The operations related to the calculation of rotation parameters can be represented by a triangle on a two-dimensional grid, while the rotation itself can be represented by a pyramid on a three-dimensional grid.

1.5 Implementation scheme of the serial algorithm

In a conventional implementation scheme, the algorithm is written as the successive elimination of the subdiagonal entries of a matrix beginning from its first column and ending with the penultimate column (that is, column n-1). When the i-th column is "eliminated", then its components i+1 to n are successively eliminated.

The elimination of the entry (j, i) consists of two steps: (a) calculating the parameters for the rotation T_{ij} that eliminates the entry (j, i); (b) multiplying the current matrix on the left by the rotation T_{ij}.

1.6 Serial complexity of the algorithm

The complexity of the serial version of this algorithm is basically determined by the mass rotation operations. If possible sparsity of a matrix is ignored, these operations are responsible (in the principal term) for n^3/3 complex multiplications. In a straightforward complex arithmetic, this is equivalent to 4n^3/3 real multiplications and 2n^3/3 real additions/subtractions.

Thus, in terms of serial complexity, Givens method is qualified as a cubic complexity algorithm.

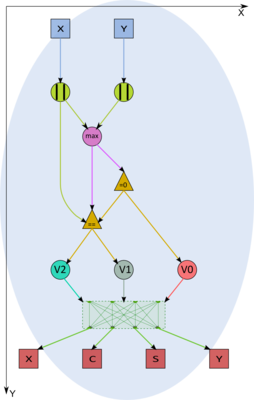

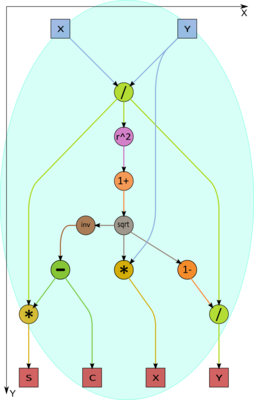

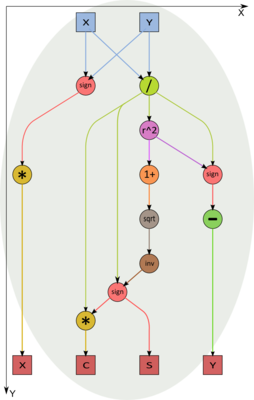

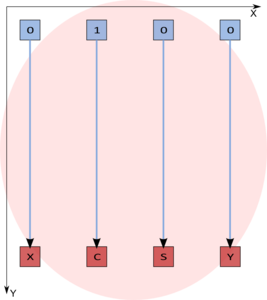

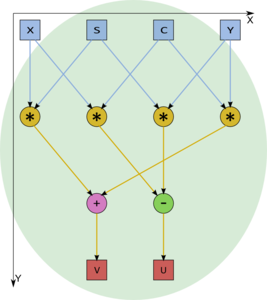

1.7 Information graph

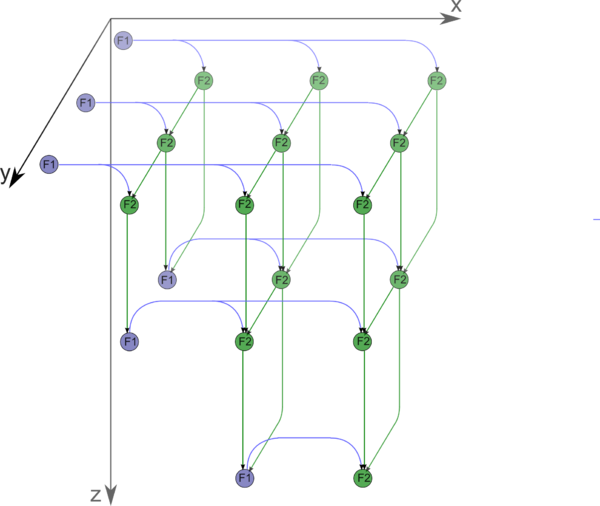

The macrograph of the algorithm is shown in fig. 1, while the graphs of the macrovertices are depicted in the subsequent figures.

1.8 Parallelization resource of the algorithm

In order to better understand the parallelization resource of Givens' decomposition of a matrix of order n, consider the critical path of its graph.

It is evident from the description of subgraphs that the macrovertex F1 (calculation of the rotation parameters) is much more "weighty" than the rotation vertex F2. Namely, the critical path of a rotation vertex consists of only one multiplication (there are four multiplications, but all of them can be performed in parallel) and one addition/subtraction (there are two operations of this sort, but they also are parallel). On the other hand, in the worst case, a macrovertex for calculating rotation parameters has the critical path consisting of a single square root calculation, two divisions, two multiplications, and two additions/subtractions.

According to a rough estimate, the critical path goes through 2n-3 macrovertices of type F1 (calculating rotation parameters) and n-1 rotation macrovertices. Altogether, this yields a critical path passing through 2n-3 square root extractions, 4n-6 divisions, 5n-7 multiplications, and the same number of additions/subtractions. In the macrograph shown in the figure, one of the critical paths consists of the passage through the upper line of F1 vertices (there are n-1 vertices) accompanied by the alternate execution of F2 and F1 (n-2 times) and the final execution of F2.

Thus, unlike in the serial version, square root calculations and divisions take a fairly considerable portion of the overall time required for the parallel variant. The presence of isolated square root calculations and divisions in some layers of the parallel form can also create other problems when the algorithm is implemented for a specific architecture. Consider, for instance, an implementation for PLDs. Other operations (multiplications and additions/subtractions) can be pipelined, which also saves resources. On the other hand, isolated square root calculations take resources that are idle most of the time.

In terms of the parallel form height, the Givens method is qualified as a linear complexity algorithm. In terms of the parallel form width, its complexity is quadratic.

1.9 Input and output data of the algorithm

Input data: dense square matrix A (with entries a_{ij}).

Size of the input data: n^2.

Output data: upper triangular matrix R (in the serial version, the nonzero entries r_{ij} are stored in the positions of the original entries a_{ij}), unitary (or orthogonal) matrix Q stored as the product of rotations (in the serial version, the rotation parameters t_{ij} are stored in the positions of the original entries a_{ij}).

Size of the output data: n^2.

1.10 Properties of the algorithm

It is clearly seen that the ratio of the serial to parallel complexity is quadratic, which is a good incentive for parallelization.

The computational power of the algorithm, understood as the ratio of the number of operations to the total size of the input and output data, is linear.

Within the framework of the chosen version, the algorithm is completely determined.

The roundoff errors in Givens (rotations) method grow linearly, as they also do in Householder (reflections) method.

2 Software implementation of the algorithm

2.1 Implementation peculiarities of the serial algorithm

In its simplest version, the QR decomposition of a real square matrix by Givens method can be written in Fortran as follows:

DO I = 1, N-1

DO J = I+1, N

CALL PARAMS (A(I,I), A(J,I), C, S)

DO K = I+1, N

CALL ROT2D (C, S, A(I,K), A(J,K))

END DO

END DO

END DO

Suppose that the translator at hand competently implements operations with complex numbers. Then the rotation subroutine ROT2D can be written as follows:

SUBROUTINE ROT2D (C, S, X, Y)

COMPLEX Z

REAL ZZ(2)

EQUIVALENCE Z, ZZ

Z = CMPLX(C, S)*CMPLX(X,Y)

X = ZZ(1)

Y = ZZ(2)

RETURN

END

or

SUBROUTINE ROT2D (C, S, X, Y)

ZZ = C*X - S*Y

Y = S*X + C*Y

X = ZZ

RETURN

END

(from the viewpoint of the algorithm graph, these subroutines are equivalent).

The subroutine for calculating the rotation parameters can have the following form:

SUBROUTINE PARAMS (X, Y, C, S)

Z = MAX (ABS(X), ABS(Y))

IF (Z.EQ.0.) THEN

C OR (Z.LE.OMEGA) WHERE OMEGA - COMPUTER ZERO

C = 1.

S = 0.

ELSE IF (Z.EQ.ABS(X))

R = Y/X

RR = R*R

RR2 = SQRT(1+RR)

X = X*RR2

Y = (1-RR2)/R

C = 1./RR2

S = -C*R

ELSE

R = X/Y

RR = R*R

RR2 = SQRT (1+RR)

X = SIGN(Y, X)*RR

Y = R - SIGN(RR,R)

S = SIGN(1./RR2, R)

C = S*R

END IF

RETURN

END

In the above implementation, the rotation parameter t is written to a vacant location (the entry of the modified matrix with the corresponding indices is known to be zero). This makes it possible to readily reconstruct rotation matrices (if required).

2.2 Possible methods and considerations for parallel implementation of the algorithm

On cluster-like supercomputers, a partitioning of an algorithm (with the usage of coordinate generalized schedule of the algorithm graph) and its distribution between different nodes (MPI) are fairly possible. At the same time, the inner parallelism in blocks will partially realize multi-core node capabilities (OpenMP) and even core superscalarity. This should be the aim of an implementation judging by the structure of the graph algorithm.

The structure of the graph makes one think that a good scalability can be attained, although, at the moment, this guess cannot be verified through concrete implementations. The favorable factors are a good (linear) nature of the critical path and a good coordinate structure of generalized schedule, which permits a good block partitioning of the algorithm graph.

An unexpected result was obtained by a search through the available program packages[3]. Most of old (serial) packages contain a subroutine for the QR decomposition via Givens method. By contrast, new packages, intended for parallel execution, perform the QR decomposition solely on the basis of Householder (reflections) method. Meanwhile, parallel properties of the latter are inferior to those of Givens method. We attribute this fact to that Householder method can easily be written in terms of BLAS-procedures, and it does not require loop reordering (except for the case where a partitioning is used; however, in this case, the coordinate rather than skewed loop partitioning is applied). On the other hand, some loop rewriting is needed for the parallel implementation of Givens method because skewed parallelism should be applied to achieve the maximum degree in its parallelization. Thus, we cannot assess the scalability of this algorithm implementations for the reason that no such implementations exist at the moment.

2.3 Run results

2.4 Conclusions for different classes of computer architecture

The module for calculating rotation parameters has a complicated logical structure. Therefore, we recommend the reader not to use PLDs as accelerators of universal processors for the reason that a significant part of their equipment will be idle. Certain difficulties are also possible on architectures with universal processors; however, the general structure of the algorithm makes it possible to expect a good performance from such architectures. For instance, on cluster-like supercomputers, a partitioning of the algorithm and its distribution between different nodes are fairly possible. At the same time, the inner parallelism in blocks will partially realize multi-core node capabilities and even core superscalarity.

3 References

- ↑ V.V. Voevodin, Yu.A. Kuznetsov. Matrices and Computations. Moscow, Nauka, 1984 (in Russian).

- ↑ V.V. Voevodin. Computational Foundations of Linear Algebra. Moscow, Nauka, 1977 (in Russian).

- ↑ A.V. Frolov, A.S. Antonov, Vl.V. Voevodin, A.M. Teplov. Comparison of different methods for solving the same problem on the basis of Algowiki methodology (in Russian) // Submitted to the conference "Parallel computational technologies (PaVT'2016)".