Horner’s method

| Horner’s method | |
|---|---|
| Sequential algorithm | |
| Serial complexity | 2n |
| Input data | n + 2 |
| Output data | n + 1 |
| Parallel algorithm | |
| Parallel form height | 2n |
| Parallel form width | 1 |

Main authors: Alexey Frolov.

Contents

- 1 Properties and structure of the algorithm

- 1.1 General description of the algorithm

- 1.2 Mathematical description of the algorithm

- 1.3 Computational kernel of the algorithm

- 1.4 Macro structure of the algorithm

- 1.5 Implementation scheme of the serial algorithm

- 1.6 Serial complexity of the algorithm

- 1.7 Information graph

- 1.8 Parallelization resource of the algorithm

- 1.9 Input and output data of the algorithm

- 1.10 Properties of the algorithm

- 2 Software implementation of the algorithm

- 3 References

1 Properties and structure of the algorithm

1.1 General description of the algorithm

1.1.1 Formulation of the problem

Horner’s scheme divides a polynomial P_n(x) with known coefficients by the binomial x - \alpha. The results of this operation are the coefficients of the polynomial Q_{n - 1}(x) defined by the relation

- P_n(x) - P_n(\alpha) = (x - \alpha) Q_{n - 1}(x)

and the value P_n(\alpha) of the polynomial P_n(x) at a given point \alpha.

Unfortunately, Horner’s method is often reduced to merely computing the value of the polynomial P_n(x) at the point \alpha.

1.1.2 General scheme

Actually, Horner’s scheme implements the long division of a polynomial by the binomial x - \alpha. By Bézout’s theorem (the polynomial remainder theorem), the remainder of this division is equal to the value of the polynomial P_n(x) at the point \alpha.
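To see why the remainder equals the value at \alpha, one can write the division with an unknown constant remainder r (a symbol introduced here only for illustration) and substitute x = \alpha:

- \begin{align} P_n(x) & = (x - \alpha) Q_{n - 1}(x) + r, \\ P_n(\alpha) & = (\alpha - \alpha) Q_{n - 1}(\alpha) + r = r. \end{align}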

1.2 Mathematical description of the algorithm

Input data: a one-dimensional array consisting of n + 1 numbers a_k and a scalar \alpha.

Output data: a one-dimensional array consisting of n + 1 numbers b_k.

The formulas of Horner's method are based on the relation

- P_n(x) - P_n(\alpha) = (x - \alpha) Q_{n - 1}(x).

Now we represent the above polynomials in canonical form:

- \begin{align} P_n(x) & = a_0 x^n+ a_1 x^{n - 1} + \dots + a_{n - 1} x + a_n, \\ Q_{n - 1}(x) & = b_0 x^{n - 1} + b_1 x^{n - 2} + \dots + b_{n - 2} x + b_{n - 1}. \end{align}

By b_n we denote P_n(\alpha). Substituting these polynomials in their canonical form into the above relation and equating the coefficients of equal powers of x, we arrive at the following formulas of Horner’s scheme:

- \begin{align} b_0 & = a_0, \\ b_k & = a_k + \alpha b_{k - 1}, \quad k = 1, \dots, n. \end{align}
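As a small worked illustration (the polynomial and the point are chosen here arbitrarily and are not part of the original description), take P_3(x) = 2x^3 - 6x^2 + 2x - 1 and \alpha = 3, so that a_0 = 2, a_1 = -6, a_2 = 2, a_3 = -1. The formulas then give

- \begin{align} b_0 & = 2, \\ b_1 & = -6 + 3 \cdot 2 = 0, \\ b_2 & = 2 + 3 \cdot 0 = 2, \\ b_3 & = -1 + 3 \cdot 2 = 5. \end{align}

Hence Q_2(x) = 2x^2 + 2 and P_3(3) = 5, which agrees with direct substitution: 2 \cdot 27 - 6 \cdot 9 + 2 \cdot 3 - 1 = 5.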

1.3 Computational kernel of the algorithm

The computational kernel of Horner’s algorithm in its serial version can be represented as a sequence of n "double" operations: the multiplication of an element of the output array by one and the same scalar, followed by the addition of the result to the next element of the input array.

1.4 Macro structure of the algorithm

As follows from the description of the computational kernel, the major part of Horner’s algorithm is the sequence of the above "double" operations.

1.5 Implementation scheme of the serial algorithm

The formulas of the algorithm are described above. The operations are performed in increasing order of k.
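The serial scheme can be sketched in Python as follows. This is a minimal illustration, not a reference implementation: the function name `horner_division` is hypothetical, and the coefficients are assumed to be stored from the highest degree down (a_0 first), as in the canonical form used in this article.

```python
def horner_division(a, alpha):
    """Divide the polynomial with coefficients a[0..n] (a[0] is the leading
    coefficient) by the binomial (x - alpha).

    Returns the list b[0..n]: b[0..n-1] are the coefficients of the quotient
    and b[n] is the remainder, i.e. the value of the polynomial at alpha.
    """
    if not a:
        raise ValueError("coefficient list must be non-empty")
    b = [a[0]]                       # b_0 = a_0
    for k in range(1, len(a)):       # increasing order of k
        b.append(a[k] + alpha * b[k - 1])
    return b


# Example data chosen for illustration: P(x) = 2x^3 - 6x^2 + 2x - 1, alpha = 3.
b = horner_division([2, -6, 2, -1], 3)
quotient, value = b[:-1], b[-1]
print(quotient, value)  # [2, 0, 2] 5
```

Each iteration uses the value produced by the previous one, so the loop cannot be reordered.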

1.6 Serial complexity of the algorithm

For a polynomial of degree n, the number of multiplications is equal to n and the number of additions is also equal to n. Hence, Horner’s algorithm is of linear complexity with respect to the number of serial operations.
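The operation count can be checked with an instrumented sketch. The function `horner_with_counts` and its counters are hypothetical names introduced only for this illustration.

```python
def horner_with_counts(a, alpha):
    """Run Horner's scheme and count the arithmetic operations performed."""
    b = [a[0]]
    multiplications = 0
    additions = 0
    for k in range(1, len(a)):
        product = alpha * b[k - 1]
        multiplications += 1
        b.append(a[k] + product)
        additions += 1
    return b, multiplications, additions


n = 9
coefficients = [1] * (n + 1)  # an arbitrary polynomial of degree n
_, mults, adds = horner_with_counts(coefficients, 2)
print(mults, adds)  # 9 9
```

For degree n = 9 the sketch performs 9 multiplications and 9 additions, that is, 2n operations in total.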

1.7 Information graph

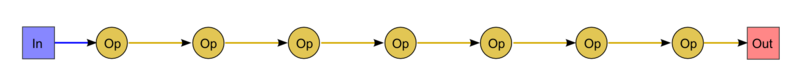

The graph of the algorithm is illustrated in Fig. 1 for n = 9. As can be seen, the graph is serial.

Here the abbreviations In (Input) and Out (Output) are used to denote the first coefficient of the input array and the value of the polynomial at a given point \alpha, respectively. By Op we denote the "double" operation: the multiplication of an input variable by a scalar and the addition of the result to another variable.

1.8 Parallelization resource of the algorithm

The serial version of Horner’s algorithm has no parallelization resource. Its parallel form is unique and coincides with the serial algorithm: every operation depends on the result of the previous one. Thus, the height of the parallel form is equal to n multiplications plus n additions. Hence, this algorithm is of linear complexity with respect to the height of the parallel form. The width of the parallel form is equal to 1; hence, this algorithm is of constant complexity with respect to the width of the parallel form.
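The height and width stated above can be reproduced by placing every operation in the earliest layer allowed by its dependencies. The sketch below is only an illustration: the function `horner_layers` and the operation labels `("mul", k)` and `("add", k)` are hypothetical, and multiplications and additions are counted as separate operations.

```python
from collections import Counter


def horner_layers(n):
    """Assign each operation of Horner's scheme to its earliest possible layer.

    Input data (the coefficients a_k, alpha, and b_0 = a_0) sit in layer 0.
    """
    layers = {}
    previous = 0  # layer of b_{k-1}; b_0 is input data
    for k in range(1, n + 1):
        layers[("mul", k)] = previous + 1             # alpha * b_{k-1}
        layers[("add", k)] = layers[("mul", k)] + 1   # a_k + product
        previous = layers[("add", k)]
    return layers


layers = horner_layers(9)
height = max(layers.values())
width = max(Counter(layers.values()).values())
print(height, width)  # 18 1
```

For n = 9 every layer holds exactly one operation, which gives a height of 2n = 18 and a width of 1.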

1.9 Input and output data of the algorithm

Input data: an array a (its elements are denoted by a_i, where i = 0, \dots, n) and a scalar \alpha.

Additional constraints: absent.

Amount of input data: n + 2.

Output data: an array b (its elements are denoted by b_k, where k = 0, \dots, n).

Amount of output data: n + 1.

1.10 Properties of the algorithm

In the case of unlimited computer resources, the ratio of the serial complexity to the parallel complexity is constant (equal to 1). The computational power of the algorithm, considered as the ratio of the number of operations to the total amount of input and output data, is approximately 1: there are 2n operations and 2n + 3 input and output values. The algorithm is completely deterministic. The arcs of the information graph are local. The stability of Horner’s scheme is optimal for the calculation of the values of a polynomial with known coefficients.

2 Software implementation of the algorithm

2.1 Implementation peculiarities of the serial algorithm

Horner’s scheme can be represented in Fortran as

    ! a(0:N): input coefficients; b(0:N-1): quotient; b(N): value at alpha
    b(0) = a(0)
    DO I = 1, N
        b(I) = a(I) + b(I-1)*alpha
    END DO

2.2 Possible methods and considerations for parallel implementation of the algorithm

This algorithm is not designed to be implemented in parallel.

In addition to the above simplest implementation, there exist even simpler codes that implement only a part of Horner’s scheme. The reason is that in many cases only the value of the polynomial (the remainder of the division) is needed, while the quotient polynomial itself is not. In such cases a single scalar variable should be used in place of all the elements of the array b.
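A minimal sketch of this reduced variant is given below. The function name `horner_eval` is hypothetical, and the coefficient list is assumed to be non-empty and ordered from the leading coefficient down.

```python
def horner_eval(a, alpha):
    """Evaluate the polynomial with coefficients a[0..n] at alpha.

    A single scalar accumulator replaces the whole array b, so the
    quotient coefficients are not kept.
    """
    result = a[0]
    for coefficient in a[1:]:
        result = coefficient + alpha * result
    return result


# Example data chosen for illustration: P(x) = 2x^3 - 6x^2 + 2x - 1 at x = 3.
print(horner_eval([2, -6, 2, -1], 3))  # 5
```

The operation count is unchanged (n multiplications and n additions), but the working memory shrinks from n + 1 values to one.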