Single-qubit transform of a state vector

| Single-qubit transform of a state vector | |
| --- | --- |
| Sequential algorithm | |
| Serial complexity | 3 \cdot 2^n |
| Input data | 2^n+4 |
| Output data | 2^n |
| Parallel algorithm | |
| Parallel form height | 2 |
| Parallel form width | 2^{n+1} |

Primary authors of this description: A.Yu.Chernyavskiy.

Contents

- 1 Properties and structure of the algorithm

- 1.1 General description of the algorithm

- 1.2 Mathematical description of the algorithm

- 1.3 The computational kernel of the algorithm

- 1.4 Macro structure of the algorithm

- 1.5 Implementation scheme of the serial algorithm

- 1.6 Serial complexity of the algorithm

- 1.7 Information graph

- 1.8 Parallelization resource of the algorithm

- 1.9 Input and output data of the algorithm

- 1.10 Properties of the algorithm

- 2 Software implementation of the algorithm

- 3 References

1 Properties and structure of the algorithm

1.1 General description of the algorithm

The algorithm simulates the action of a single-qubit quantum gate on a state vector. [1] [2] [3]

Operations on a small number of qubits (quantum bits) are the basis of quantum computation. Moreover, it is known that an arbitrary quantum computation can be represented using only single- and two-qubit operations. Such operations are the analogues of simple classical logic operations (NOT, AND, XOR, etc.), but they differ in important ways that make simulation difficult. The main difference is that even a single-qubit operation generally changes the whole state vector, and thus affects all other qubits, because of quantum entanglement. This "non-locality" gives quantum computers their computational power but also makes them very hard to simulate.

The single-qubit transform is often used as a subroutine that is applied repeatedly to different qubits of a state, for example in the simulation of quantum algorithms and in the analysis of quantum entanglement. As with most classical algorithms in quantum information science, the amount of data grows exponentially with the number of qubits, so practically important problems require a supercomputer.

1.2 Mathematical description of the algorithm

Input data:

Integer parameters n (the number of qubits) and k (the index of the affected qubit, 1 \le k \le n).

A complex 2 \times 2 matrix U = \begin{pmatrix} u_{00} & u_{01}\\ u_{10} & u_{11} \end{pmatrix} of a single-qubit transform.

A complex 2^n-dimensional vector v, which denotes the initial state of a quantum system.

Output data: the complex 2^n-dimensional vector w, which corresponds to the quantum state after the transformation.

The single-qubit transform can be represented as follows:

The output state after the action of the transform U on the k-th qubit is w = (I_{2^{k-1}}\otimes U \otimes I_{2^{n-k}})\, v, where I_{j} is the j-dimensional identity matrix, and \otimes denotes the tensor (Kronecker) product.

However, the elements of the output vector can be written in a direct form that is more convenient for computation:

- w_{i_1i_2\ldots i_k \ldots i_n} = \sum\limits_{j_k=0}^1 u_{i_k j_k} v_{i_1i_2\ldots j_k \ldots i_n} = u_{i_k 0} v_{i_1i_2\ldots 0_k \ldots i_n} + u_{i_k 1} v_{i_1i_2\ldots 1_k \ldots i_n}

The tuple index i_1i_2\ldots i_n is the binary representation of an array index, with i_1 as the most significant bit.
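As a small worked instance of the direct form (the values n = 2 and k = 1 are chosen here purely for illustration), the four output amplitudes are:

```latex
\begin{aligned}
w_{00} &= u_{00} v_{00} + u_{01} v_{10}, \\
w_{01} &= u_{00} v_{01} + u_{01} v_{11}, \\
w_{10} &= u_{10} v_{00} + u_{11} v_{10}, \\
w_{11} &= u_{10} v_{01} + u_{11} v_{11}.
\end{aligned}
```

This agrees with the matrix U \otimes I_2 applied to v. Each output amplitude depends on exactly two input amplitudes, whose indices differ only in the first bit.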

1.3 The computational kernel of the algorithm

The computational kernel of the single-qubit transform consists of 2^n computations of the elements of the output vector w. Computing each element takes two complex multiplications and one complex addition. In addition, the index i_1i_2\ldots 0_k \ldots i_n (together with its counterpart containing 1_k) and the bit i_k must be computed, which requires bitwise operations.

1.4 Macro structure of the algorithm

As noted in the description of the computational kernel, the main part of the algorithm consists of the independent computations of the output vector elements.

1.5 Implementation scheme of the serial algorithm

For i from 0 to 2^n-1

- Calculate the element i_k of the binary representation of the index i.

- Calculate the index j with binary representation i_1i_2\ldots \overline{i_k} \ldots i_n, where the overline denotes a bit flip.

- Calculate w_i = u_{i_k i_k}\cdot v_{i} + u_{i_k \overline{i_k}}\cdot v_j.

1.6 Serial complexity of the algorithm

The following number of operations is needed for the single-qubit transform:

- 2^{n+1} complex multiplications;

- 2^n complex additions;

- 2^n k-th bit reads;

- 2^n k-th bit writes.

Note that the algorithm is often used iteratively, so the bit operations (the last two items above) can be performed once at the beginning of the computation. Moreover, they can be avoided by using additions and bitwise multiplications (AND) with 2^k, which can also be calculated once for each k.
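One way to avoid per-element bit operations is to walk over the pairs of indices directly, using only additions with a precomputed stride. The following is a minimal sketch of that idea, not the original implementation. The names `amp_t` and `apply_gate_pairs` are hypothetical, and qubit 1 is assumed to correspond to the most significant index bit, so that the stride equals 2^{n-k}.

```c
#include <assert.h>
#include <complex.h>
#include <stddef.h>

/* Assumed amplitude type; the text does not define one. */
typedef double complex amp_t;

/*
 * Pairwise form of the single-qubit transform (illustrative sketch).
 * n: number of qubits; k: affected qubit (1-based, qubit 1 is assumed to be
 * the most significant index bit). The arrays in and out hold 2^n amplitudes
 * and must not overlap.
 */
void apply_gate_pairs(const amp_t *in, amp_t *out, amp_t U[2][2], int n, int k)
{
    assert(n >= 1 && n < (int)(8 * sizeof(size_t)) && k >= 1 && k <= n);
    size_t size = (size_t)1 << n;          /* 2^n amplitudes */
    size_t stride = (size_t)1 << (n - k);  /* distance between paired indices */

    /* Each block of 2*stride indices holds stride pairs (i0, i0 + stride). */
    for (size_t base = 0; base < size; base += 2 * stride) {
        for (size_t off = 0; off < stride; off++) {
            size_t i0 = base + off;   /* index with bit k equal to 0 */
            size_t i1 = i0 + stride;  /* index with bit k equal to 1 */
            out[i0] = U[0][0] * in[i0] + U[0][1] * in[i1];
            out[i1] = U[1][0] * in[i0] + U[1][1] * in[i1];
        }
    }
}
```

The operation count is unchanged (two multiplications and one addition per output element), but the inner loop no longer reads or writes individual bits of the index.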

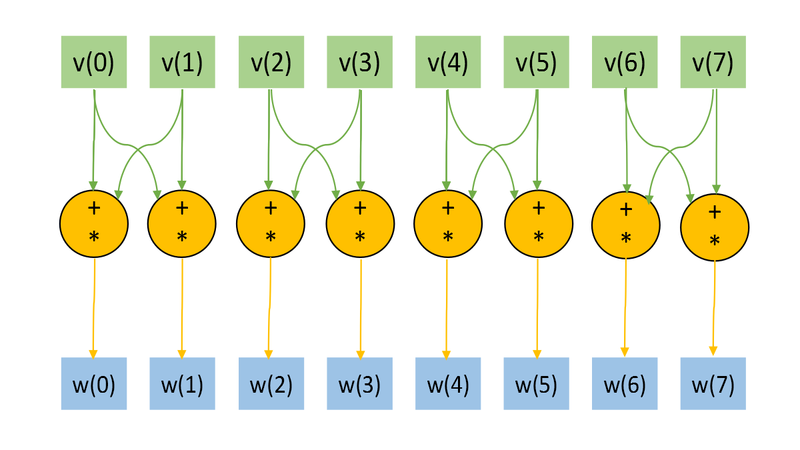

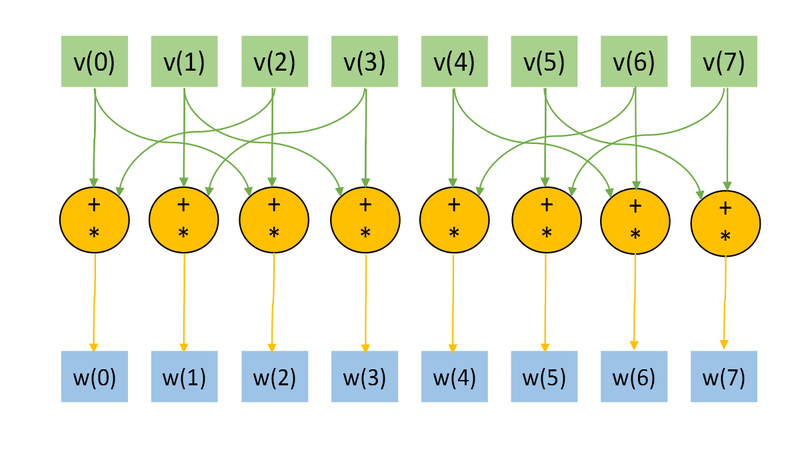

1.7 Information graph

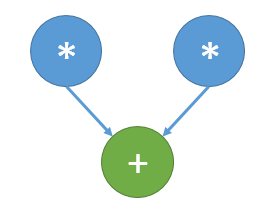

Figs. 1 and 2 show the information graph for the parameters n=3, k=1 and n=3, k=2. The graphs are shown without the transformation matrix U because it is small in comparison with the input and output data. Fig. 3 shows the main operation, which is drawn in orange in Figs. 1-2.

The representation with input and output data and the "folded" triple operation is useful for understanding memory locality. Note that the structure of the information graph depends strongly on the parameter k.

1.8 Parallelization resource of the algorithm

As the information graph shows, the direct algorithm for simulating a single-qubit transform has a very high parallelization resource, because all output vector elements can be computed independently. The two multiplications needed for each output vector element can also be done in parallel.

So, there are only two levels of computations:

- 2^{n+1} multiplications.

- 2^n additions.

1.9 Input and output data of the algorithm

Input data:

- A 2^n-dimensional complex state vector v. In most cases the vector is normalized to 1.

- A 2 \times 2 unitary matrix U.

- The index k of the affected qubit (named q in the C code of section 2.1).

Output data:

- The 2^n-dimensional complex output state vector w.

Amount of input data: 2^n+4 complex numbers and 1 integer parameter.

Amount of output data: 2^n complex numbers.

1.10 Properties of the algorithm

The ratio of the serial complexity to the parallel complexity is exponential in n: the exponential serial complexity is reduced to a constant parallel form height.

The computational power of the algorithm considered as the ratio of the number of operations to the amount of input and output data is constant.
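Using the counts stated earlier (serial complexity 3 \cdot 2^n arithmetic operations, parallel form height 2, 2^n+4 input and 2^n output complex numbers), both ratios can be written out explicitly. Bit operations are left out of the count, as in the serial complexity figure.

```latex
\frac{3 \cdot 2^n}{2} = 3 \cdot 2^{n-1},
\qquad
\frac{3 \cdot 2^n}{(2^n + 4) + 2^n} = \frac{3}{2 + 2^{2-n}}
\xrightarrow[n \to \infty]{} \frac{3}{2}.
```

The first ratio grows exponentially with n, while the second stays below 3/2 for every n, which is why the algorithm is bound by memory traffic rather than by arithmetic.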

2 Software implementation of the algorithm

2.1 Implementation peculiarities of the serial algorithm

The function of the single-qubit transform may be represented in C language as:

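    #include <complex.h>

    // Assumed definition: the complexd type is not defined in the source.
    typedef double complex complexd;

    void OneQubitEvolution(const complexd *in, complexd *out, complexd U[2][2], int nqubits, int q)
    {
        // nqubits - number of qubits (must be below 31 so that 1 << nqubits fits in an int)
        // q - index of the affected qubit (1-based, qubit 1 is the most significant bit)
        // in and out must not overlap: every out[i] reads two elements of in
        int shift = nqubits - q;
        // All bits are zero except the bit corresponding to the affected qubit.
        int pow2q = 1 << shift;
        int N = 1 << nqubits;
        for (int i = 0; i < N; i++)
        {
            // Clear the affected bit
            int i0 = i & ~pow2q;
            // Set the affected bit
            int i1 = i | pow2q;
            // Read the value of the affected bit
            int iq = (i & pow2q) >> shift;
            out[i] = U[iq][0] * in[i0] + U[iq][1] * in[i1];
        }
    }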

Note that a large part of the computation and of the code logic consists of bit operations, but this can be avoided. In most cases the single-qubit transform is just a subroutine that is called many times in sequence. Moreover, the shift and the bit masks from which the indexes i0, i1, and iq are obtained depend only on the parameters n and q. Since n is fixed, they can be calculated once at the beginning of the computation for every value of q; this takes only 3n integers of storage, whereas the input and output data grow exponentially. Such an optimization is especially important for implementations on hardware or in software with slow bit operations (for example, Matlab).
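The precomputation described above might look as follows. This is a hedged sketch rather than the original code: the `QubitMasks` structure, `InitQubitMasks`, `OneQubitEvolutionTable`, the `MAX_QUBITS` limit, and the `complexd` typedef are all assumptions introduced for illustration. The table stores three integers per qubit, matching the 3n figure in the text.

```c
#include <assert.h>
#include <complex.h>

/* Assumed amplitude type; the text does not define complexd. */
typedef double complex complexd;

#define MAX_QUBITS 30  /* illustrative limit so that 1 << nqubits fits in an int */

/* Hypothetical per-qubit table: three integers for each qubit index q. */
typedef struct {
    int shift[MAX_QUBITS + 1];  /* position of the affected bit */
    int set[MAX_QUBITS + 1];    /* mask with only the affected bit set */
    int clear[MAX_QUBITS + 1];  /* mask with only the affected bit cleared */
} QubitMasks;

/* Fill the table once for a fixed number of qubits. */
void InitQubitMasks(QubitMasks *m, int nqubits)
{
    assert(nqubits >= 1 && nqubits <= MAX_QUBITS);
    for (int q = 1; q <= nqubits; q++) {
        m->shift[q] = nqubits - q;
        m->set[q] = 1 << m->shift[q];
        m->clear[q] = ~m->set[q];
    }
}

/* Same transform as OneQubitEvolution, reading the masks from the table.
 * The arrays in and out must not overlap. */
void OneQubitEvolutionTable(const complexd *in, complexd *out, complexd U[2][2],
                            const QubitMasks *m, int nqubits, int q)
{
    assert(q >= 1 && q <= nqubits);
    int N = 1 << nqubits;
    for (int i = 0; i < N; i++) {
        int iq = (i >> m->shift[q]) & 1;  /* value of the affected bit */
        out[i] = U[iq][0] * in[i & m->clear[q]] + U[iq][1] * in[i | m->set[q]];
    }
}
```

When gates are applied to several qubits in sequence, the table is filled once and the two state buffers can be swapped between calls, so no extra copy of the state vector is needed.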

2.2 Possible methods and considerations for parallel implementation of the algorithm

The main method of parallelization is straightforward: parallelize the main loop (the independent computations of the output vector elements) and, possibly, the in-loop multiplications, which are also independent, as can be seen from Fig. 3. On shared memory systems this method leads to near-ideal speed-up. On distributed memory machines, however, the algorithm has an inherent problem with data transfer (see, for example, Fig. 8): for big tasks (a large number of qubits n), the number of transfer operations becomes comparable to the number of arithmetic operations. A possible partial solution is the use of caching or of different programming paradigms such as SHMEM. Nevertheless, this non-locality makes it impossible to achieve ideal efficiency on distributed memory machines.

The algorithm is implemented (mostly in serial versions) in various libraries for quantum information science and quantum computer simulation, for example QLib, libquantum, QuantumPlayground, and LIQUiD.
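For the shared memory case, the loop-level parallelization can be sketched with an OpenMP directive. This is an illustration under stated assumptions, not a tuned implementation: the function name `OneQubitEvolutionOmp` and the `complexd` typedef are hypothetical, and the static schedule is just one reasonable choice for iterations of equal cost.

```c
#include <assert.h>
#include <complex.h>

/* Assumed amplitude type; the text does not define complexd. */
typedef double complex complexd;

/*
 * Shared-memory sketch: the iterations of the main loop are independent,
 * so they can be divided among threads. Compile with OpenMP enabled
 * (for example -fopenmp with GCC); without it the pragma is ignored
 * and the loop runs serially with the same result.
 */
void OneQubitEvolutionOmp(const complexd *in, complexd *out, complexd U[2][2],
                          int nqubits, int q)
{
    assert(nqubits >= 1 && nqubits < 31 && q >= 1 && q <= nqubits);
    assert(in != out);  /* every out[i] reads two elements of in */
    const int shift = nqubits - q;
    const int pow2q = 1 << shift;
    const int N = 1 << nqubits;

    #pragma omp parallel for schedule(static)
    for (int i = 0; i < N; i++) {
        int iq = (i >> shift) & 1;  /* value of the affected bit */
        out[i] = U[iq][0] * in[i & ~pow2q] + U[iq][1] * in[i | pow2q];
    }
}
```

Each thread writes a disjoint part of `out` and only reads `in`, so no synchronization is needed inside the loop.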

2.3 Run results

2.4 Conclusions for different classes of computer architecture

Because of its very high parallelization resource but low locality, an efficient and well-scaling parallel implementation of the algorithm can be achieved on shared memory systems. The use of distributed memory, however, leads to efficiency problems and requires special optimization techniques. This task is therefore a very good test platform for developing methods for data-intensive problems with poor locality.
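To make the distributed memory difficulty concrete, the following sketch shows which process holds the data needed for a given gate. It assumes a block distribution that is not specified in the text: the 2^n amplitudes are split into contiguous blocks over 2^p processes, so the top p index bits select the process. The function name `PartnerRank` is hypothetical.

```c
#include <assert.h>

/*
 * Illustrative helper under an assumed block distribution: 2^n amplitudes
 * are split into contiguous blocks over 2^p processes, so the top p index
 * bits select the process. Returns the rank that holds the partner
 * amplitudes needed by `rank` for a gate on qubit q (1-based, qubit 1 is
 * the most significant bit). The result equals `rank` when the gate needs
 * no communication.
 */
int PartnerRank(int rank, int p, int q)
{
    assert(p >= 0 && p < 31 && q >= 1 && rank >= 0 && rank < (1 << p));
    if (q > p)
        return rank;               /* affected bit lies inside the local block */
    return rank ^ (1 << (p - q));  /* affected bit is one of the rank bits */
}
```

Under this assumed layout, a gate on one of the first p qubits requires every process to obtain the whole block of its partner, that is 2^{n-p} amplitudes, while it performs only 3 \cdot 2^{n-p} arithmetic operations on its own block.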

3 References

- [1] Kronberg D. A., Ozhigov Yu. I., Chernyavskiy A. Yu. Algebraic tools for quantum information. (In Russian)

- [2] Preskill J. Lecture notes for Physics 229: Quantum information and computation. California Institute of Technology, 1998.

- [3] Nielsen M. A., Chuang I. L. Quantum computation and quantum information. Cambridge University Press, 2010.