One step of the dqds algorithm

| The dqds algorithm for computing singular values of bidiagonal matrix | |

| Sequential algorithm | |

| Serial complexity | 5n-4 |

| Input data | 2n |

| Output data | 2n |

| Parallel algorithm | |

| Parallel form height | 4n-3 |

| Parallel form width | 2 |

Primary authors of this description: A.Yu.Chernyavskiy

Contents

- 1 Properties and structure of the algorithm

- 1.1 General description of the algorithm

- 1.2 Mathematical description of the algorithm

- 1.3 Computational kernel of the algorithm

- 1.4 Macro structure of the algorithm

- 1.5 Implementation scheme of the serial algorithm

- 1.6 Serial complexity of the algorithm

- 1.7 Information graph

- 1.8 Parallelization resource of the algorithm

- 1.9 Input and output data of the algorithm

- 1.10 Properties of the algorithm

- 2 Software implementation of the algorithm

- 3 References

1 Properties and structure of the algorithm

1.1 General description of the algorithm

The dqds algorithm iteration implements one step of the dqds algorithm for calculating the singular values of a bi-diagonal matrix.

The full dqds algorithm (differential quotient-difference algorithm with shifts)[1][2] constructs a sequence of bi-diagonal matrices that converges to a diagonal matrix containing the squares of the required singular values. It has the merit of calculating the singular values to high accuracy.

The computational kernel of the algorithm is its inner iteration. Outside of the iterations, shifts \delta (iteration parameters, see mathematical description of the algorithm) are chosen, convergence is looked after, and various optimization tricks are applied. Note that the non-iterative part of the algorithm is not important with respect to the structure of calculations because the main computational costs fall on the calculations within the iterations. Details concerning the non-iterative part, its variants, and the analysis of convergence can be found in the related literature [3] [4] [5].

1.2 Mathematical description of the algorithm

1.2.1 Auxiliary information

In order to understand the mathematical background of dqds iteration, it is helpful to briefly discuss its derivation, which, to same extent, reflects the history of the algorithm (the details can be found in [1]). It is convenient to begin with the so-called LR iteration, which predates the well-known QR iteration. Starting from the initial symmetric positive definite matrix T_0\gt , the LR algorithm constructs a converging sequence of matrices T_i\gt 0 that are similar to T_0. In this construction, the following three steps are used iteratively:

- Choose a shift \tau_i that is less than the smallest eigenvalue of the matrix T_i.

- Compute the Cholesky decomposition T_i-\tau^2_iI=B_i^TB_i, where B_i is an upper triangular matrix with a positive diagonal.

- T_{i+1}=B_iB_i^T+\tau_i^2I.

Note that two steps of LR iteration with a zero shift are equivalent to a single step of QR iteration. This iterative procedure brings the matrix to diagonal form, calculating thereby the eigenvalues of the original matrix. It is easy to reformulate the LR algorithm for the problem of calculating the singular values of bi-diagonal matrices, and, for this problem, the algorithm becomes noticeably simpler. Namely, let us calculate a sequence of bi-diagonal matrices B_i avoiding a direct calculation of matrices T_i (which, in this case, are tri-diagonal). Let B_i have the diagonal entries a_1 \ldots a_n and the super-diagonal entries b_1 \ldots b_{n-1}, while B_{i+1} has the diagonal entries \widehat{a}_1 \ldots \widehat{a}_n and the super-diagonal entries \widehat{b}_1 \ldots \widehat{b}_{n-1}. Then, in terms of the matrices B_i, one step of LR iteration can be reduced to a simple loop over j running from 1 to n-1:

- \widehat{a}^2_j = a^2_j+b^2_j-\widehat{b}^2_{j-1}-\delta

- \widehat{b}^2_j = b^2_j\dot (a^2_{j+1}/a^2_j),

which is completed by the calculation of \widehat{a}^2_n = a^2_n-\widehat{b}^2_{n-1}-\delta. The extraction of square roots is obviously better to postpone until the algorithm terminates; hence, one can set q_j=a^2_j,\; e_j=b^2, which ultimately yields the so-called qds algorithm. It is given by the following formulas:

- \widehat{q}_j = q_j + e_j - \widehat{e}_{j-1} - \delta, \quad j \in [1,n-1]

- \widehat{e}_j = e_j \cdot q_{j+1} / \widehat{q}_j, \quad j \in [1,n-1]

- \widehat{q}_n = q_n - \widehat{e}_{n-1} - \delta.

Here, </math> q_j, \; j \in [1,n] and e_j, \; j \in [1,n-1] are the squares of the diagonal and super-diagonal entries, respectively. The circumflexes mark the output variables, and the symbol \delta denotes the shift (which is a parameter of the algorithm). This is the most compact formulation of the so-called qds iteration.

1.2.2 Mathematical description of dqds iteration

Let us give the mathematical formulation of dqds iteration (from a mathematical viewpoint, qds and dqds iterations are equivalent), using the auxiliary variables t_j and d_j. One dqds iteration transforms the input bi-diagonal matrix B to the output matrix \widehat{B}.

Input and output data: q_{j}, \; j\in [1,n], \; e_{k}, \; k\in [1,n-1] are the squares of the diagonal and super-diagonal entries of the input matrix B; \widehat{q}_j , \; \widehat{e}_k have the same meaning for the matrix \widehat{B} to be calculated.

The method is given by the following formulas:

- d_1 = q_1 - \delta, \; q_n = d_n

- для \quad j\in [1,n-1]:

- \widehat{q}_j = d_j + e_j

- t_j = q_{j+1}/\widehat{q}_j

- \widehat{e}_j=e_j \cdot t_j

- d_{j+1} = d \cdot t - \delta

1.3 Computational kernel of the algorithm

The computational kernel of the algorithm consists in the successive calculation of the squares of the diagonal (\widehat{q}_j) and super-diagonal (\widehat{e}_k) entries of the output matrix. In view of the use of auxiliary variables, the calculation of each new pair requires one addition, one subtraction, one division, and two multiplications.

1.4 Macro structure of the algorithm

The algorithm consists of the separate calculation of the initial value of the auxiliary variable d, the subsequent execution (repeated (n-1) times) of the sequence of 5 operations (+,/,*,*,-) for calculating the squares of the diagonal (\widehat{q}_j) and super-diagonal (\widehat{e}_k) entries of the output matrix, and the concluding calculation of the last value \widehat{q}_n.

1.5 Implementation scheme of the serial algorithm

Note that the output data can right away be written to the locations of the old data (which is used in the scheme). To store the auxiliary variables t_j and d_j, it is sufficient to have two rewritable variables. Thus, the diagonal (q_j) and super-diagonal (e_k) entries of the input matrix are successively replaced by the corresponding entries of the output matrix.

The operations in the method are performed in the following order:

1. Compute the initial value of the auxiliary variable d = q_1-\delta.

2. Perform a loop over j from 1 to n-1. It consists of the following:

- 2.1 Compute the value q_j = d + e_j;

- 2.2 Compute the value of the auxiliary variable t = q_{j+1}/q_j;

- 2.3 Compute the value e_j = e_j \cdot t;

- 2.4 Compute the value of the auxiliary variable d = d \cdot t - \delta.

3. Compute q_n = d.

These calculations can be organized in a different form, for instance, in the form of qds iteration (see Mathematical description of dqds iteration). However, it is dqds implementation that makes it possible to attain high accuracy [1].

1.6 Serial complexity of the algorithm

In order to perform one dqds iteration, it is necessary to execute

- n-1 divisions,

- 2n-2 multiplications,

- 2n-1 additions/subtractions.

Thus, one dqds iteration has a linear complexity.

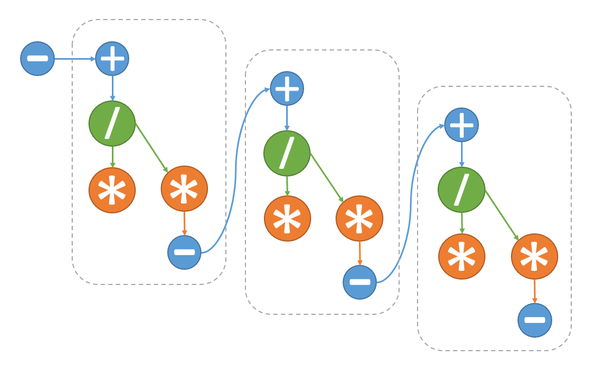

1.7 Information graph

1.8 Parallelization resource of the algorithm

The information graph of the algorithm shows that, at each step of the main loop, only multiplication (2.2) and multiplication + addition (2.4) can be executed in parallel. This makes it possible to reduce the number of layers per loop iteration from 5 to 4 and the overall number of layers in the algorithm from 5n-4 to 4n-3. The layers with multiplications contain two operations, while the other layers contain only one operation.

1.9 Input and output data of the algorithm

Input data: The squares of the diagonal and super-diagonal entries of the input bi-diagonal matrix (the vector q of length n and the vector e of length n-1) and the shift parameter \delta.

Size of the input data: 2n.

Output data: The squares of the diagonal and super-diagonal entries of the output bi-diagonal matrix.

Size of the output data: 2n-1.

1.10 Properties of the algorithm

If the parallel execution of multiplications is possible, then the ratio of the serial to parallel complexity is \frac{5n-4}{4n-3}, that is, the algorithm is poorly parallelizable.

The computational power of the algorithm, understood as the ratio of the number of operations to the total size of the input and output data, is a constant.

The algorithm is completely determined.

2 Software implementation of the algorithm

2.1 Implementation peculiarities of the serial algorithm

In the Matlab language, the algorithm can be written as follows:

d = q(1)-delta;

for j = 1:n-1

q(j)=d+e(j);

t=q(j+1)/q(j);

e(j) = e(j)*t;

d = d*t-delta;

end

q(n) = d;

As already said in the section Implementation scheme of the serial algorithm, the calculated values are written to the locations of the input data.

2.2 Possible methods and considerations for parallel implementation of the algorithm

The dqds iteration is practically entirely serial. The only possibility for parallelization is the simultaneous execution of multiplication (2.2) and multiplication + addition (2.4), which yields a small gain in performance.

The dqds algorithm is implemented as the function xBDSQR of the LAPACK package. It is executed when this function is called with the option of calculating singular vectors not activated.

2.3 Результаты прогонов

2.4 Conclusions for different classes of computer architecture

An efficient implementation of the algorithm is only possible on a computer facility with one core or two.

3 References

- ↑ Jump up to: 1.0 1.1 1.2 Demmel. D. Applied numerical linear algebra. 1997.

- ↑ Hogben L. (ed.). Handbook of linear algebra. – CRC Press, 2006.

- ↑ Fernando K. V., Parlett B. N. Accurate singular values and differential qd algorithms //Numerische Mathematik. – 1994. – Т. 67. – №. 2. – С. 191-229.

- ↑ Parlett B. N., Marques O. A. An implementation of the dqds algorithm (positive case) //Linear Algebra and its Applications. – 2000. – Т. 309. – №. 1. – С. 217-259.

- ↑ Aishima K. et al. On convergence of the DQDS algorithm for singular value computation //SIAM Journal on Matrix Analysis and Applications. – 2008. – Т. 30. – №. 2. – С. 522-537.